When Objects Are Partially Visible: A Smarter Approach to Recognition

You’re walking down a crowded street and think you recognize someone from just a glimpse of a face, partly hidden behind other people. Humans are remarkably good at this: we fill in the gaps. AI systems, however, struggle. Modern vision models work well when objects are fully visible, but once an object is severely occluded (behind a tree or bushes, under camouflage, or behind other objects), their performance drops quickly.

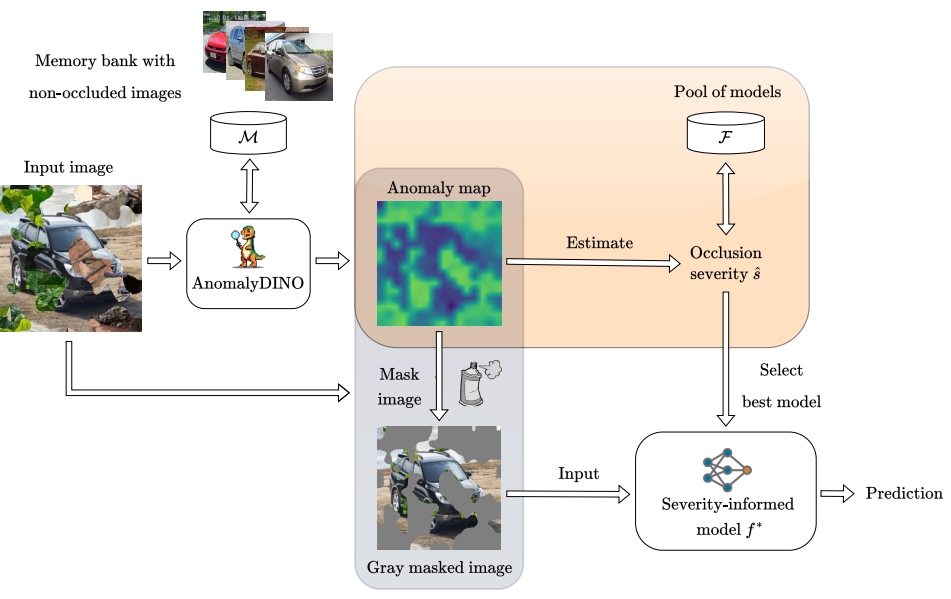

In our work on OASIC, we take a different perspective. Instead of forcing a single model to handle all cases, we first ask: how much of the object is actually visible? A system that sees almost everything should rely on fine details, while one that sees only fragments must fall back on coarse, robust cues. By estimating how severely the object is occluded, filtering out distracting patterns, and then selecting a classifier tailored to that level of occlusion, the system adapts its strategy to what it can actually see.
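The routing idea above can be sketched in a few lines. This is not OASIC's implementation, which the text does not detail. It is a minimal illustration that rests on several assumptions: occlusion arrives as a binary mask, severity is measured as the fraction of visible pixels, "filtering out distracting patterns" is approximated by blanking the occluder pixels, and the thresholds, route names, and stub classifiers are all hypothetical placeholders for trained models.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

# Assumption: an image is a 2D grid of grayscale values, and the
# occlusion mask marks pixels believed to belong to an occluder (True).
Image = List[List[float]]
Mask = List[List[bool]]


def visible_fraction(mask: Mask) -> float:
    """Share of pixels NOT covered by the occluder mask (0.0 for an empty mask)."""
    total = sum(len(row) for row in mask)
    occluded = sum(cell for row in mask for cell in row)  # True counts as 1
    return 1.0 - occluded / total if total else 0.0


def suppress_occluder(image: Image, mask: Mask, fill: float = 0.0) -> Image:
    """Hypothetical filtering step: overwrite occluder pixels with a neutral value."""
    return [
        [fill if m else px for px, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


@dataclass
class SeverityRoute:
    min_visible: float                   # hypothetical lower bound on visible fraction
    name: str
    classifier: Callable[[Image], str]   # placeholder for a trained model


def route(routes: Sequence[SeverityRoute], visible: float) -> SeverityRoute:
    """Pick the most detail-oriented route whose visibility threshold is met."""
    for r in sorted(routes, key=lambda r: r.min_visible, reverse=True):
        if visible >= r.min_visible:
            return r
    raise ValueError("no route covers this visibility level")


def classify(image: Image, mask: Mask, routes: Sequence[SeverityRoute]) -> Tuple[str, str]:
    """Estimate severity, filter the occluder, then dispatch to the chosen classifier."""
    visible = visible_fraction(mask)
    cleaned = suppress_occluder(image, mask)
    chosen = route(routes, visible)
    return chosen.name, chosen.classifier(cleaned)


# Illustrative thresholds and stub classifiers (hypothetical, not from OASIC).
routes = [
    SeverityRoute(0.7, "fine", lambda img: "fine-detail model"),
    SeverityRoute(0.3, "medium", lambda img: "mid-level model"),
    SeverityRoute(0.0, "coarse", lambda img: "coarse-cue model"),
]

image = [[0.5] * 4 for _ in range(4)]
mask = [[col < 3 for col in range(4)] for _ in range(4)]  # 3 of 4 columns occluded

print(classify(image, mask, routes))  # ('coarse', 'coarse-cue model')
```

Only a quarter of the object is visible here, so the sketch skips the detail-oriented routes and falls back to the coarse one. Ordering routes by descending threshold keeps the rule simple: use the most detail-hungry classifier the visible evidence can support.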

This simple shift, matching perception to visibility, matters because in real-world settings such as autonomous driving or robotics, objects are rarely fully visible. Teaching AI not just to see, but to understand how well it sees, is a small but important step toward systems that work reliably in the messy, occluded world we actually live in.